Let's cut through the hype. When you hear "artificial intelligence," you might picture sci-fi robots or supercomputers plotting world domination. The reality is far more ordinary, and honestly, more useful. In the simplest terms, artificial intelligence (AI) is a branch of computer science focused on creating machines or software that can perform tasks typically requiring human intelligence. Think learning, reasoning, problem-solving, understanding language, and recognizing patterns.

I've been working with and writing about this stuff for over a decade. The biggest mistake beginners make is thinking AI is a single, magical thing. It's not. It's a toolbox of techniques that make machines smart in specific, limited ways. Your email spam filter that learns what you mark as junk? That's AI. The map app that finds the fastest route around traffic? That's AI. The streaming service suggesting your next show? Yep, that's AI too.

It's already woven into your daily life. The goal here isn't to turn you into a programmer, but to give you a clear mental model so the next time you read a headline about AI, you can think, "Ah, I know what they're *actually* talking about."


The Core Idea Behind AI

Strip away all the complexity, and AI is about one thing: automating intelligent behavior. Humans are great at adapting. We see a new type of dog and still know it's a dog. We hear a sentence with a word missing and fill in the blank. We learn from a single mistake. Traditional software can't do that—it follows rigid, pre-written instructions.

AI tries to bridge that gap. Instead of telling a computer exactly what to do in every situation, we give it a goal and a method to learn from data or experience. Modern chess programs are a classic example. You don't code every possible move. You give the program the rules and a way to evaluate board positions, and it learns (through practice or data) which strategies lead to victory.

The Simple Analogy: Imagine teaching a child to identify animals. You don't give them a biology textbook. You show them many pictures, saying "this is a cat," "this is a dog." Their brain finds patterns—ears, fur, size—and builds a model. AI, particularly a subset called machine learning, works similarly. We feed it vast amounts of data (the pictures) and correct answers (the labels), and it builds its own statistical model for making future identifications.
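If you're curious what "building a model from labeled examples" can look like in code, here is a deliberately tiny sketch in Python. It assumes each picture has already been boiled down to two made-up numbers (how pointy the ears are on a 0 to 1 scale, and weight in kilograms); the animals, numbers, and function names are all hypothetical. It uses a nearest-centroid classifier, one of the simplest learning methods, not what real image-recognition systems use.

```python
# Toy "learn from labeled examples" sketch: a nearest-centroid classifier.
# Features are hypothetical: (ear pointiness 0-1, weight in kg).
from math import dist


def train(examples):
    """Average the feature vectors for each label to get one 'prototype' per class."""
    sums, counts = {}, {}
    for features, label in examples:
        acc = sums.setdefault(label, [0.0] * len(features))
        for i, value in enumerate(features):
            acc[i] += value
        counts[label] = counts.get(label, 0) + 1
    return {label: [v / counts[label] for v in acc] for label, acc in sums.items()}


def predict(model, features):
    """Return the label whose prototype is closest to the new example."""
    return min(model, key=lambda label: dist(model[label], features))


# Made-up "pictures" with their correct labels.
labeled_pictures = [
    ((0.9, 4.0), "cat"),
    ((0.8, 5.0), "cat"),
    ((0.3, 25.0), "dog"),
    ((0.4, 30.0), "dog"),
]

model = train(labeled_pictures)
print(predict(model, (0.85, 4.5)))   # prints: cat
print(predict(model, (0.35, 28.0)))  # prints: dog
```

Weight dominates the distance here because the features aren't rescaled; real systems typically normalize their inputs and learn from far more features than two.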

How Does AI Actually Work? It's Not Magic

Most modern AI, the kind making headlines, is powered by machine learning (ML). Here’s a non-technical breakdown of the process:

- Data In: You start with a massive dataset. For a translation AI, this is millions of sentence pairs in English and Spanish. For a fraud detection AI, it's millions of labeled transactions ("fraudulent" or "legitimate"). Garbage in, garbage out—this step is crucial.

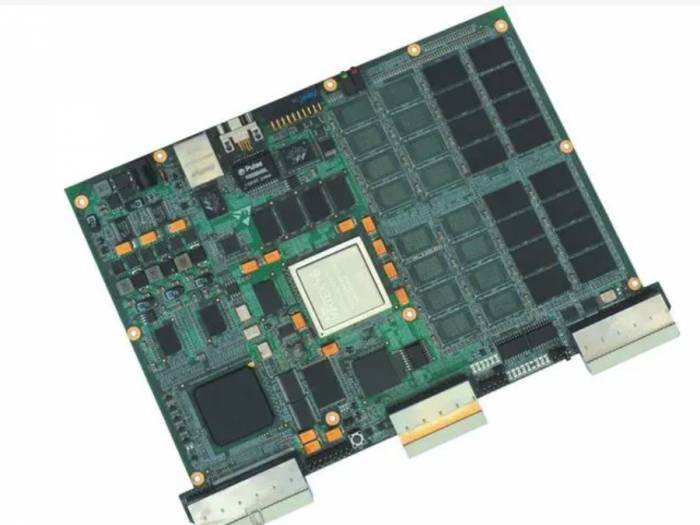

- Training: An algorithm (a set of mathematical procedures) chews through the data. It's essentially making guesses, checking them against the known answers, and adjusting internal numerical "knobs" (thousands in small models, billions in the largest ones) to reduce error. This is the "learning" phase. It can take huge amounts of computing power and time.

- The Model: The end product of training is a "model." This isn't code in the traditional sense; it's a complex mathematical function—a network of those adjusted knobs—that has learned the patterns in the data. It's a pattern-matching machine.

- Prediction/Inference: You deploy the model. Now, when you give it new, unseen data (a new Spanish sentence, a new credit card transaction), it uses its learned function to produce an output (an English translation, a fraud score).
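Here is a minimal sketch of the data, training, model, and inference steps, assuming a made-up dataset that follows the rule y = 2x + 1 and a "model" with just two knobs (a weight `w` and a bias `b`). It uses plain gradient descent, one common training method; real systems apply the same guess, check, and adjust idea to vastly more knobs and data.

```python
# Data in: made-up examples, each input x paired with its known answer y (here y = 2x + 1).
data = [(0.0, 1.0), (1.0, 3.0), (2.0, 5.0), (3.0, 7.0)]


def train(data, steps=2000, learning_rate=0.05):
    """Training: repeatedly guess, measure the error, and nudge the knobs to reduce it."""
    w, b = 0.0, 0.0  # the two "knobs", starting from an uninformed guess
    n = len(data)
    for _ in range(steps):
        # How much the mean squared error changes as each knob changes (its gradient).
        grad_w = sum(2 * (w * x + b - y) * x for x, y in data) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in data) / n
        # Move each knob a small step in the direction that lowers the error.
        w -= learning_rate * grad_w
        b -= learning_rate * grad_b
    return w, b  # the model: nothing more than the adjusted knob values


def infer(model, x):
    """Prediction/inference: apply the learned function to a new input."""
    w, b = model
    return w * x + b


model = train(data)
print(round(infer(model, 10.0), 2))  # unseen input; prints: 21.0
```

After training, `w` and `b` settle at roughly 2 and 1: the model recovered the pattern from examples alone, without anyone writing the rule into the code.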

A specific, powerful type of ML is the neural network, loosely inspired by the brain's network of neurons. Neural networks have layers that process information hierarchically, which makes them exceptionally good at tasks like image and speech recognition. When people talk about "deep learning," they mean neural networks with many of these layers.
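To make "layers" concrete, here is a hypothetical two-layer network in Python that computes XOR ("one or the other, but not both"), a classic task that a single layer of this kind cannot solve. The weights are hand-picked for illustration rather than learned, and the all-or-nothing `step` activation is a simplification; real networks learn their weights through training and typically use smoother activations.

```python
def step(z):
    """Fire (1) if the weighted input is positive, otherwise stay quiet (0)."""
    return 1 if z > 0 else 0


def layer(inputs, weights, biases):
    """One layer: each neuron takes a weighted sum of all inputs plus a bias, then applies step()."""
    return [
        step(sum(w * x for w, x in zip(neuron_w, inputs)) + bias)
        for neuron_w, bias in zip(weights, biases)
    ]


# Hand-picked (not learned) weights, chosen for illustration.
HIDDEN_W = [[1, 1], [1, 1]]
HIDDEN_B = [-0.5, -1.5]  # neuron 1 acts like OR, neuron 2 acts like AND
OUTPUT_W = [[1, -1]]
OUTPUT_B = [-0.5]        # "OR but not AND", i.e. XOR


def network(a, b):
    hidden = layer([a, b], HIDDEN_W, HIDDEN_B)   # layer 1 detects simple features
    return layer(hidden, OUTPUT_W, OUTPUT_B)[0]  # layer 2 combines them


for a in (0, 1):
    for b in (0, 1):
        print(a, b, "->", network(a, b))  # outputs 0, 1, 1, 0 in turn
```

The first layer detects simple features ("at least one input is on", "both inputs are on") and the second layer combines them, which is the hierarchical idea in miniature.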

The 4 Main Types of AI (From Simple to Sci-Fi)

Not all AI is created equal. Researchers often categorize AI into types based on capability. This table clarifies the spectrum, from what exists today to what remains theoretical.

| Type of AI | Core Capability | Real-World Example | Status |
|---|---|---|---|
| 1. Reactive Machines | Responds to specific inputs with specific outputs. Has no memory or ability to learn from past experiences. | IBM's Deep Blue chess computer (1997). It analyzed board positions but didn't learn from past games. | Exists today (widely used) |
| 2. Limited Memory | Can use past experiences (data) to inform future decisions. This is where most modern AI lives. | Self-driving cars that observe other cars' speed and direction to navigate. Large Language Models (LLMs) like ChatGPT that predict text based on patterns in training data. | State of the Art (Narrow AI) |
| 3. Theory of Mind | A hypothetical AI that understands that others have their own beliefs, intentions, and emotions. Could engage in true social interaction. | None exist. Advanced social robots or AI companions that truly understand human feelings are a distant goal. | Purely Theoretical / Research |
| 4. Self-Aware AI | AI with consciousness, self-awareness, and its own desires. This is the sentient AI of science fiction. | Fictional entities like HAL 9000 or Data from Star Trek. Raises immense philosophical and ethical questions. | Science Fiction |

Key takeaway: When you interact with AI today—Siri, Netflix, a chatbot—you are interacting with Limited Memory AI, often called Narrow or Weak AI. It's brilliant at its one specific task but has no general understanding of the world. The jump to Theory of Mind or Self-Awareness is not just a technical hurdle; we don't even fully understand how to define or measure consciousness in machines.

AI in Action: Real-World Examples You Use Daily

To move from abstract to concrete, let's map AI to your everyday life. This isn't a future promise; it's your Tuesday.

- Communication: Your smartphone's keyboard suggests your next word (predictive text). Gmail finishes your sentences (Smart Compose). Zoom cancels your background noise.

- Entertainment: Spotify's "Discover Weekly" analyzes your listening history to find new music. TikTok's "For You" page is a masterclass in AI-driven content recommendation.

- Commerce: Amazon's product recommendations. Dynamic pricing on flights and ride-shares. The fraud alert from your bank.

- Health & Wellness: Fitness trackers analyzing your sleep patterns. Apple Watch's ECG and fall detection. AI-assisted review of medical scans (like X-rays) to help radiologists spot anomalies faster.

- Creativity: Tools like DALL-E or Midjourney generating images from text prompts. AI-assisted editing in Photoshop removing objects from photos.

Notice a pattern? The AI isn't "thinking." It's pattern-matching at a scale and speed impossible for humans. The recommendation engine doesn't "know" you'll like that song; it calculates that people with a listening history 98% similar to yours also liked it.
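The "people like you" calculation can be sketched with user-based collaborative filtering, one classic recommendation technique. This is an illustration with made-up play counts and hypothetical names, not how Spotify or any other specific service actually works. Similarity here is cosine similarity between listening histories.

```python
from math import sqrt

# Hypothetical play counts per song for a few listeners (made-up data).
listening = {
    "you":   {"song_a": 5, "song_b": 3, "song_c": 0, "song_d": 0},
    "alex":  {"song_a": 4, "song_b": 3, "song_c": 5, "song_d": 0},
    "blake": {"song_a": 0, "song_b": 0, "song_c": 1, "song_d": 5},
}


def cosine_similarity(u, v):
    """1.0 means identical listening proportions; 0.0 means no songs in common."""
    songs = u.keys() | v.keys()
    dot = sum(u.get(s, 0) * v.get(s, 0) for s in songs)
    norm_u = sqrt(sum(c * c for c in u.values()))
    norm_v = sqrt(sum(c * c for c in v.values()))
    return dot / (norm_u * norm_v) if norm_u and norm_v else 0.0


def recommend(user, data):
    """Suggest songs the most similar listener played that `user` hasn't."""
    others = [name for name in data if name != user]
    nearest = max(others, key=lambda name: cosine_similarity(data[user], data[name]))
    return [
        song for song, plays in data[nearest].items()
        if plays > 0 and data[user].get(song, 0) == 0
    ]


print(recommend("you", listening))  # prints: ['song_c']
```

The code never "knows" anything about music: it only notices that your history overlaps with Alex's and passes along what Alex played.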

Busting Common AI Myths & Misconceptions

Here’s where my decade in the field lets me point out subtle errors that most introductory guides gloss over.

Myth 1: AI is inherently objective. This is dangerously wrong. AI models learn from data created by humans. If that data contains societal biases (e.g., historical hiring data favoring one group over another), the AI will learn and amplify those biases. An AI resume screener trained on biased data will perpetuate discrimination. The model isn't racist; it's a mirror reflecting our flawed data.
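The mirror effect can be shown with a deliberately crude sketch: a "model" that learns historical hire rates from made-up records, where group membership is just another input column. The groups, records, and function names are hypothetical; real screening tools are far more complex, but they also learn from past decisions used as labels.

```python
from collections import defaultdict

# Made-up historical records: (applicant group, years of experience, was hired?)
history = [
    ("group_x", 5, True), ("group_x", 3, True), ("group_x", 2, True), ("group_x", 1, False),
    ("group_y", 5, False), ("group_y", 3, False), ("group_y", 2, True), ("group_y", 1, False),
]


def bucket(group, years):
    """The features the model sees: group membership plus a coarse experience level."""
    return (group, years >= 3)


def train(records):
    """Learn the historical hire rate for each feature combination."""
    hired, total = defaultdict(int), defaultdict(int)
    for group, years, was_hired in records:
        key = bucket(group, years)
        total[key] += 1
        hired[key] += was_hired  # True counts as 1, False as 0
    return {key: hired[key] / total[key] for key in total}


def score(model, group, years):
    """Predicted chance of being hired, based only on what past decisions looked like."""
    return model[bucket(group, years)]


model = train(history)
# Two applicants with identical experience (4 years), different groups:
print(score(model, "group_x", 4), score(model, "group_y", 4))  # prints: 1.0 0.0
```

The two applicants get opposite scores purely because the past decisions the model learned from treated the groups differently.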

Myth 2: AI understands content like a human does. A large language model like ChatGPT generates stunningly coherent text. But it doesn't "understand" truth, logic, or facts in the human sense. It repeatedly predicts a statistically likely next word based on patterns in its training data. This is why it can sometimes "hallucinate," confidently stating complete falsehoods. It's a stochastic parrot, not a reasoning entity.
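Next-word prediction can be illustrated with a toy bigram model: it counts which word follows which in a small made-up text and always picks the most frequent follower. This is a hypothetical sketch; real LLMs use huge neural networks over tokens and often sample from probabilities rather than always taking the top choice, but the core task of predicting what comes next is the same.

```python
from collections import Counter, defaultdict


def train_bigrams(text):
    """Count which word follows which; these counts are the entire 'model'."""
    words = text.lower().split()
    following = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        following[current][nxt] += 1
    return following


def predict_next(model, word):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    counts = model.get(word.lower())
    # On ties, most_common() keeps the follower that was seen first.
    return counts.most_common(1)[0][0] if counts else None


def generate(model, start, length=5):
    """Chain predictions together, one word at a time."""
    words = [start]
    for _ in range(length):
        nxt = predict_next(model, words[-1])
        if nxt is None:
            break
        words.append(nxt)
    return " ".join(words)


corpus = "the cat sat on the mat . the cat ate the fish . the dog sat on the rug ."
model = train_bigrams(corpus)
print(predict_next(model, "the"))         # prints: cat
print(generate(model, "dog", length=4))   # prints: dog sat on the cat
```

The phrase "dog sat on the cat" never appears in the training text. Each step was statistically plausible, yet the result isn't grounded in anything the model saw, which is a small-scale version of how fluent but false output can arise.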

Myth 3: Strong, human-like AI is just around the corner. Media hype cycles suggest that Artificial General Intelligence (AGI), an AI that can perform any intellectual task a human can, is imminent. Most experts in the field, like those contributing to Stanford University's One Hundred Year Study on Artificial Intelligence, believe AGI is decades away, if it's achievable at all. The challenges aren't just about more computing power; they're fundamental gaps in our understanding of cognition.

Where Is AI Headed? A Realistic Look Ahead

Forget the robot overlords. The near-term future of AI is less about creating new species of intelligence and more about integration and specialization.

We'll see more AI copilots embedded in every professional tool—helping programmers write code, scientists analyze data, marketers draft copy. The goal is augmentation, not replacement. Think of it as having a super-powered, tireless research assistant who is brilliant at finding patterns but still needs you to ask the right questions and judge the output.

Another trend is making AI smaller and more efficient to run directly on devices ("edge AI"), improving speed and privacy. Your phone already does this for facial recognition.

The real frontier is grappling with the ethical and societal impacts: job market shifts, misinformation at scale (deepfakes), and the concentration of power in the hands of a few companies that control the most advanced models. Research from the McKinsey Global Institute has consistently highlighted that the biggest economic impact of AI will come from transforming business processes, not from creating sentient beings.

Your Burning AI Questions, Answered

So, what is artificial intelligence in simple terms? It's a set of tools that allow computers to perform specific intelligent tasks by learning from data. It's not magic, it's not sentient, and it's not a single entity. It's a transformative technology that's already here, working quietly in the background of your life. Understanding its basics—its capabilities, its limitations, and its very human flaws—is no longer just for techies. It's essential for navigating the world we're building.